The following charts show different pieces of a sobering story: the US and the world have not done, and for the foreseeable future will not do, enough to reduce carbon-intensive energy. This shouldn’t come as any great surprise, but I think these charts let us look at the story graphically instead of just hearing the words. Graphics tend to stick in the memory better than words, so I’m going to use them to tell this story.

Figure 1. Annual global installations of new power sources, in gigawatts. [Source: MotherJones via BNEF]

This figure starts the story off on a good note. To the left of the dotted line is historical data and to the right is BNEF’s projected data. In the future, we expect fewer new gigawatts generated by coal, gas, and oil. We also expect many more new gigawatts generated by land-based wind, small-scale photovoltaic (PV) and solar PV. Thus the good news: there will be more new gigawatts powered by renewable energy sources within the next couple of years than dirty energy sources. At the same time, this graph is slightly misleading. What about existing energy production? The next chart takes that into account.

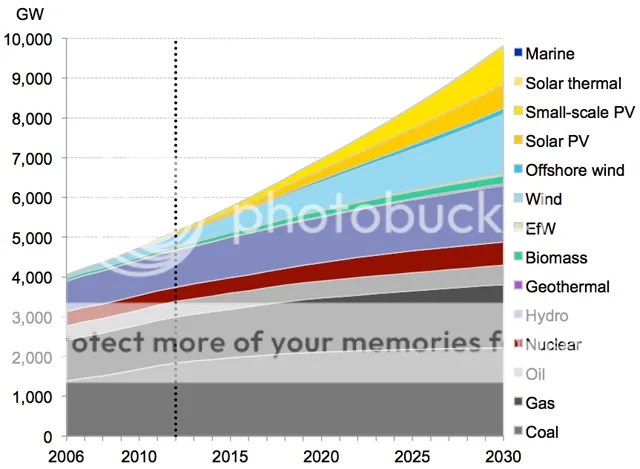

Figure 2. Global energy use by generation type, in gigawatts. [Source: MotherJones via BNEF]

The story just turned sober. In 2030, coal is projected to account for ~2,000GW of energy production compared to ~1,200GW today. Coal is the dirtiest of the fossil fuels, so absent radical technological innovation and deployment, 2030 emissions will exceed today’s due to coal alone. We find the same storyline for gas and to a lesser extent oil: higher generation in 2030 than today means more emissions. We need fewer emissions if we want to reduce atmospheric CO2 concentrations. The higher those concentrations, the warmer the globe will get until it reaches a new equilibrium.

Compare the two graphs again. The rapid increase in renewable energy generation witnessed over the last decade and expected to continue through 2030 results in what by 2030? Perhaps ~1,400GW of wind generation (about the same as gas) and up to 1,600GW of total solar generation (more than gas but still less than coal). This is an improvement over today’s generation portfolio of course. But it will not be enough to prevent >2°C mean global warming and all the subsequent effects that warming will have on other earth systems. The curves delineating fossil fuel generation need to slope toward zero and that doesn’t look likely to happen prior to 2030.

Here is the basic problem: there are billions of people without reliable energy today. They want energy and one way or another will get that energy someday. Thus, the total energy generated will continue to increase for decades. The power mix is up to us. The top chart will have to look dramatically different for the mix to tilt toward majority and eventually exclusively renewable energy. The projected increases in new renewable energy will have to be double, triple, or more what they are in the top chart to achieve complete global renewable energy generation. Instead of a couple hundred gigawatts per year, we need a couple thousand gigawatts per year. That requires a great deal of innovation and deployment – more than even many experts are aware.

Let’s take a look at the next part of the story: carbon emissions in the US – up until recently the largest annual GHG emitter on the globe.

Figure 3. Percent change in the economy’s carbon intensity 2000-2010. [Source: ThinkProgress via EIA]

As Jeff notes, the total carbon intensity of the economy (the amount of carbon released for every million dollars the economy produces) dropped 17.9 percent over those ten years. That’s good news. Part of the reason is bad news: the economy became more energy-efficient in part due to the recession. People and organizations stopped doing some of the most expensive activities, which also happened to be some of the most polluting activities. We can attribute the rest of the decline to the switch from coal to natural gas, which is a good thing for US emissions but a bad thing for global emissions, because we’re selling the coal that other countries burn – as Figure 2 shows.

Figure 4. Percent change in the economy’s total carbon emissions 2000-2010. [Source: ThinkProgress via EIA]

Figure 4 re-sobers the story. While the economy emitted less carbon per dollar of output, total emissions from 2000 to 2010 dropped only 4.2%. My own home state of Colorado, despite having a Renewable Energy Standard that mandates renewables in the energy mix, saw a greater than 10% jump in total carbon emissions. Part of the reason is that Xcel Energy convinced the state Public Utilities Commission that new, expensive coal plants should be built. The reason? Xcel is a for-profit corporation and new coal plants added billions of dollars to the positive side of their ledger, especially since they passed those costs on to their rate payers.
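The gap between the two national figures (intensity down 17.9%, total emissions down only 4.2%) follows from the definition of carbon intensity as emissions divided by economic output. A minimal sketch of that arithmetic, using only the two percentages quoted in this post; the function name is just illustrative, and the result is what those two figures imply, not an official growth statistic:

```python
# Carbon intensity = emissions / economic output, so
# emissions = intensity * output, and the ratios of change multiply:
# (1 + emissions_change) = (1 + intensity_change) * (1 + output_change)

intensity_change = -0.179   # 2000-2010, from Figure 3
emissions_change = -0.042   # 2000-2010, from Figure 4


def implied_output_change(intensity_change, emissions_change):
    """Output growth implied by the intensity and emissions changes."""
    return (1 + emissions_change) / (1 + intensity_change) - 1


growth = implied_output_change(intensity_change, emissions_change)
print(f"Implied growth in economic output, 2000-2010: {growth:.1%}")
```

In other words, most of the efficiency gain was offset by a larger economy, which is why total emissions barely moved.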

In order for the US to achieve its Copenhagen goals (17% reduction from 2005 levels), more states will have to show total carbon emission declines post-2010. While 2012 US emission levels were the lowest since 1994, we still emit more than 5 billion metric tons of CO2 annually. Furthermore, the US deliberately chose 2005 levels since they were the historical high mark for emissions. The Kyoto Protocol, by contrast, challenged countries to reduce emissions compared to 1990 levels. The US remains above 1990 levels, which were just under 5 billion metric tons of CO2. 17% of 1990 emissions is roughly 850 million metric tons. Once we achieve that decrease, we can talk about real progress.
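To see why the choice of baseline year matters, here is a rough sketch of the two targets. The 1990 figure (about 5 billion metric tons) comes from the paragraph above. The 2005 figure of about 6 billion metric tons is my own round-number assumption, not a number from the charts, so treat the second result as illustrative only:

```python
REDUCTION = 0.17

baseline_1990 = 5.0e9   # metric tons CO2, "just under 5 billion" per the text
baseline_2005 = 6.0e9   # metric tons CO2, ASSUMED round number, not from the charts


def target_after_cut(baseline, reduction=REDUCTION):
    """Annual emissions remaining after cutting `reduction` from `baseline`."""
    return baseline * (1 - reduction)


cut_1990 = baseline_1990 * REDUCTION
print(f"17% of 1990 emissions: {cut_1990 / 1e6:,.0f} million metric tons")
print(f"Target vs 1990 baseline: {target_after_cut(baseline_1990) / 1e9:.2f} billion")
print(f"Target vs 2005 baseline: {target_after_cut(baseline_2005) / 1e9:.2f} billion")
```

Under that assumption, a 17% cut from 2005 lands at about the 1990 level itself, while a 17% cut from 1990 would land well below it.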

The bottom line is this: it matters how much carbon in total gets into the atmosphere if we want to limit the total amount of warming that will occur this century and the next few tens of thousands of years. There has been a significant lack of progress on that:

Figure 5. Historical and projected energy sector carbon intensity index.

We are on the red line path. If that is our reality through 2050, we will blow past 560 ppm atmospheric CO2 concentration, which means we will blow past the 2-3°C sensitivity threshold that skeptics like to talk about the most. That temperature only matters if we limit CO2 concentrations to two times their pre-industrial value. We’re on an 800-1100 ppm concentration pathway, which would mean up to 6°C warming by 2100 and additional warming beyond that.

The size and scope of the energy infrastructure requirements to achieve an 80% reduction in US emissions from 1990 levels by 2050 are mind-boggling. It requires 300,000 10-MW solar thermal plants or 1,200,000 2.5-MW wind turbines or 1,300 1GW nuclear plants (or some combination thereof) by 2050 because you have to replace the existing dirty energy generation facilities as well as meet increasing future demand. And that’s just for the US. What about every other country on the planet? That is why I think we will blow past the 2°C threshold. As the top graphs show, we’re nibbling around the edges of a massive problem. We will not see a satisfactory energy/climate policy emerge on this topic anytime soon. The once-in-a-generation opportunity to do so existed in 2009 and 2010 and national-level Democrats squandered it (China actually has a national climate policy, by the way). I think the policy answers lie in local and state-based efforts for the time being. There is too wide a gap between the politics we need and the politics we have at the national level.
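For a sense of scale, the three build-out options quoted above can be converted to total nameplate capacity. This is only a sketch that multiplies the counts and unit sizes given in the text. It makes no claim about how those counts were derived, although the gap between the nuclear total and the other two is what one would expect if the original estimate assumed different capacity factors (my assumption, not stated in the source):

```python
# (number of units, size of each unit in MW), exactly as quoted in the text
options = {
    "solar thermal": (300_000, 10.0),
    "wind": (1_200_000, 2.5),
    "nuclear": (1_300, 1_000.0),
}


def nameplate_gw(count, unit_mw):
    """Total nameplate capacity in gigawatts."""
    return count * unit_mw / 1_000.0


totals = {name: nameplate_gw(*spec) for name, spec in options.items()}
for name, gw in totals.items():
    print(f"{name}: {gw:,.0f} GW nameplate")

# Nuclear nameplate needed per unit of wind nameplate in the quoted figures
ratio = totals["nuclear"] / totals["wind"]
print(f"nuclear / wind nameplate ratio: {ratio:.2f}")
```

Compare those totals with the "couple hundred gigawatts per year" of new global installations in Figure 1, and the size of the US task alone becomes clearer.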